Hello,

I was asked by an employer to be able to display 1 million billboards on screen at 60 frames per second. Assuming a best-case scenario of 1 texture being reused by all billboards, is this a metric that is achievable?

If not, do you have any suggestions on more effective solutions for displaying images at this volume on the globe that can be picked? Thank you for your thoughts.

As metrics go, it sounds like a bad one and probably not an achievable one either. A better metric would be “with what we’re trying to do, can it run on the average computer of our average user?”

Basically, I would question why anyone needs to display 1 million billboards. On its own it makes no sense, from either a systems perspective or a human one. What I always suspect with these things is that you can trim it down to “what we’re trying to do”, which usually ends up as some actual functional application: display some geospatial information of one context on a globe in a browser, through an application of another context. And very often you can ask questions like:

- What info do you want to display? (live data, changing data, full data / part data, selectable data, etc.)

- When do you want to display it? (all the time, at acceptable intervals, at acceptable proximities, at acceptable cluster definitions, distances, etc. [see below])

- What’s the geospatial context to displaying the info (distance to camera, proximity to other pieces of information, importance of selections or near-objects, etc.)

Very often a paradigm like “1 million billboards” has an answer that is filtered, sorted, interfaced, systematized and optimized, and that answer is a far better metric.

Cheers,

Alex

Hey Alexander,

Thank you for the detailed response. After reading your comment I went and spoke with the employer and hashed some requirements out that answered your questions.

It looks like 1 million billboards being displayed on the globe at any given time is not strictly required; billboards only need to exist for data points that are being viewed when zoomed in at a relatively low altitude.

The data points will be coming in as live data, with up to 2000 being received per second. It would be best to assume that 2000 billboards will need to be created per second, but I think this is something Cesium can handle if you keep the total number of billboards on the viewer below 100k.

So it looks like I will be attempting a filtered solution, adding and removing billboards based on what area of the globe we are looking at. Do you think that sounds like a feasible approach?
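
A minimal sketch of that filtered approach, assuming points arrive as plain objects with `lon`/`lat` in degrees and that the viewed area is a rectangle that does not cross the antimeridian. The names `inRectangle` and `BoundedPointStore` are hypothetical, and creating or removing the actual billboards is left out:

```javascript
// Hypothetical helper: true when a point lies inside a lon/lat rectangle (degrees).
// Assumes the rectangle does not cross the antimeridian.
function inRectangle(point, rect) {
  return (
    point.lon >= rect.west && point.lon <= rect.east &&
    point.lat >= rect.south && point.lat <= rect.north
  );
}

// Hypothetical store that keeps at most `capacity` points, evicting the oldest first.
class BoundedPointStore {
  constructor(capacity) {
    this.capacity = capacity;
    this.points = []; // oldest first
  }

  // Adds a point if it is inside the view; returns the points evicted to respect the cap.
  add(point, viewRect) {
    if (!inRectangle(point, viewRect)) return [];
    this.points.push(point);
    const overflow = this.points.length - this.capacity;
    return overflow > 0 ? this.points.splice(0, overflow) : [];
  }

  // Drops points that fall outside a new view; returns the removed points.
  prune(viewRect) {
    const removed = this.points.filter((p) => !inRectangle(p, viewRect));
    this.points = this.points.filter((p) => inRectangle(p, viewRect));
    return removed;
  }
}
```

The evicted and removed points are returned so the caller can remove the matching billboards. With a cap near 100k, a ring buffer would likely be cheaper than `splice`, but the array keeps the sketch short.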

Hiya,

Sure, sounds reasonable, but as with all these things it comes down to the spec of the user’s machine, as it all runs in the browser. A machine with no GPU or dedicated graphics card will make Cesium seriously slow, and memory consumption matters as well.

I would seriously consider not pushing every incoming point through the Cesium APIs each second. Instead, do a quick calculation per incoming point of its distance to the camera before adding it to a clustered entityCollection. Slap some display rules (also distance-based) on there to not show points too far away: you can compare the width of the camera view with the distance between things, and only show points within 50% of that width, for example. There are many more ways of optimizing things, but it’s a good starting point.
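
One way to read that distance rule, as a rough sketch in plain JavaScript. It assumes positions are `{x, y, z}` objects in meters, that the camera looks straight down, and that the view width is approximated from the camera height and field of view. The helper names and the 0.5 default are illustrative, not part of any Cesium API:

```javascript
// Euclidean distance between two {x, y, z} positions in meters.
function distance(a, b) {
  return Math.hypot(a.x - b.x, a.y - b.y, a.z - b.z);
}

// Approximate ground width seen by a camera looking straight down
// from `height` meters with a horizontal field of view `fov` (radians).
function visibleWidth(height, fov) {
  return 2 * height * Math.tan(fov / 2);
}

// Keep a point only if it lies within `fraction` of the visible width
// from the ground position directly under the camera.
function shouldShow(point, groundFocus, height, fov, fraction = 0.5) {
  return distance(point, groundFocus) <= fraction * visibleWidth(height, fov);
}
```

Running `shouldShow` on each incoming point before creating an entity keeps points that would be filtered out from ever reaching the scene.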

Let us know how you go!

Cheers,

Alex

Hey Alex,

Thanks again for the tip. Filtering based on the camera location seems to have really improved performance! Here is a working example. You’ll notice the billboards only begin rendering when you reach a certain height threshold:

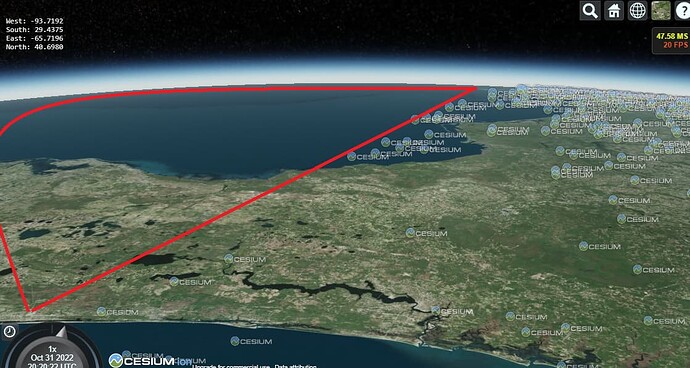

However, I am running into an issue I am having a hard time solving. If you zoom in and pitch the camera to view the horizon, you will notice that if you look around, the rectangle seems to not encompass one of the four cardinal directions (I’ve noticed it happening when looking South East). Here’s an example showing what I mean, where you can see the cutoff line of the rectangle:

It is much worse if you keep looking left, but I wanted to be sure to include the cutoff line.

Fortunately, I have found a way to calculate the horizon in a different way that doesn’t have this problem, as you can see here:

However, this has the opposite problem that the horizon is too wide, and when I try placing billboards inside it, I hit performance problems because too many are being rendered.

Do you know of a way I can shrink the size of the rectangle in the second example so it covers a smaller distance? Say, by a factor of 1/2 as an arbitrary amount? I’ve tried using the subsection method in the rectangle API (Rectangle - Cesium Documentation), but I could not figure out how to use it, or whether I am using it for its intended purpose.

Thank you for any advice you can offer!

Actually after doing more testing, I found a simple solution to shrink the rectangle by using the center:

// Corner positions of the original view rectangle (Cartesian3).
// position.getValue(time) is the public equivalent of the private _position._value.
const now = Cesium.JulianDate.now();
const corners = [entityW, entityS, entityE, entityN].map((entity) =>
  entity.position.getValue(now)
);

const rect = Cesium.Rectangle.fromCartesianArray(corners);

const rectCenter = Cesium.Cartographic.toCartesian(
  Cesium.Rectangle.center(rect)
);

// Adding the center to a corner gives a vector that points roughly halfway
// between the two. Rectangle.fromCartesianArray only uses longitude and
// latitude, so the doubled length of the summed vector does not matter.
const smallCorners = corners.map((corner) =>
  Cesium.Cartesian3.add(rectCenter, corner, new Cesium.Cartesian3())
);

const smallerRect = Cesium.Rectangle.fromCartesianArray(smallCorners);

It would be nice to be able to make the amount the rectangle shrinks configurable, but that is beyond my math abilities.
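
For what it’s worth, a configurable shrink can be sketched directly on the rectangle’s bounds rather than on Cartesian points. This is only a sketch under assumptions: `shrinkRectangle` is a hypothetical helper, `rect` is any object with `west`, `south`, `east` and `north` in radians (the field names `Cesium.Rectangle` uses), and the rectangle does not cross the antimeridian:

```javascript
// Shrinks a rectangle about its center. A `factor` of 0.5 halves both the
// width and the height; a factor of 1 leaves the rectangle unchanged.
// Assumption: west <= east, i.e. the rectangle does not cross the antimeridian.
function shrinkRectangle(rect, factor) {
  const centerLon = (rect.west + rect.east) / 2;
  const centerLat = (rect.south + rect.north) / 2;
  const halfWidth = ((rect.east - rect.west) / 2) * factor;
  const halfHeight = ((rect.north - rect.south) / 2) * factor;
  return {
    west: centerLon - halfWidth,
    south: centerLat - halfHeight,
    east: centerLon + halfWidth,
    north: centerLat + halfHeight,
  };
}
```

The four returned values can then be passed to `new Cesium.Rectangle(west, south, east, north)` if a real `Rectangle` instance is needed.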

Thanks for the write-up, looks good. My math is not really good, either. For some of these issues, instead of calculating things like a view area and finding items within it, you can rely on others who do better math, like the distance rules for billboards and such (which are properties you can read) that look at distance to camera. Cluster is good for this, where you alter the size of the clusters based on, say, distance between the camera and the globe, which I’ve done with great results.

Cheers,

Alex

Yeah, distanceDisplayCondition, pixelOffsetScaleByDistance, scaleByDistance, translucencyByDistance, but in combination with cluster. I think show=false on an entity switches off its change detection, but I could be wrong about that. I’m about to write some plugins for entity clustering in the next week or so, so I’ll keep this thread in mind while doing so. I was thinking of a large cluster value based on height above the globe, and it might be really effective with a rectangle selector and filter.
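
To make the “cluster value based on height” idea concrete, here is a small sketch. The function name, the linear mapping and all the default numbers are made up for illustration and would need tuning against real data:

```javascript
// Hypothetical mapping from camera height (meters) to a cluster pixel range.
// Higher camera -> larger clusters. Thresholds and ranges are illustrative only.
function pixelRangeForHeight(height, minRange = 10, maxRange = 80, maxHeight = 2000000) {
  const t = Math.min(Math.max(height / maxHeight, 0), 1); // clamp to [0, 1]
  return Math.round(minRange + t * (maxRange - minRange));
}
```

In Cesium the result could be assigned to `dataSource.clustering.pixelRange` (with `dataSource.clustering.enabled` set to `true`), recalculated from `viewer.camera.positionCartographic.height` whenever `viewer.camera.changed` fires.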

Cheers,

Alex

I agree with the general sentiment that rendering a million individual billboards is likely overkill and that the desired behaviour can be achieved in a more effective way. But I was wondering whether it would be possible at all, so I worked on a Cesium plugin that does massive marker rendering. It works via a points primitive and a shader sampling the marker’s image, and it is more performant than Billboards.

Here’s a demo of rendering over a million markers around the globe: Cesium GPU Point Layer Demo

And this is a thread with some info on the plugin: Plugin for massive markers rendering & animation - cesium-gpu-points-layer

I hope someone finds it useful.

Lovely piece of work, it’s very interesting. You should add a way to select the number of items to render, as a million is unusable on my machine. However, I’d be very keen to see what 10,000 and 100,000 look like, and so on, maybe with simpler markings (I often don’t need planes and boats, but simple circles, dots, maybe a map marker, etc., some of which might be faster to render?). The next step would also be movement of said markers (real-time? timed updates?). I’m sure there are fast ways into some of the functionality offered by the full and slower API.

Cheers,

Alex

Thank you, Alex!

It’s possible to select different settings in the top left of the screen. By default, it would render 34833 items (all ships and aircraft scraped from public APIs) at medium graphics quality, neatly within a 10k to 100k interval. It’s possible to “multiply” rendered items. Each copy would be shifted in position. The link I provided earlier is presetting x30 multiplication, so that there are over a million markers.

Try this link (it’s ensuring the default settings): Cesium GPU Point Layer Demo

You can also switch between GPU points and Billboards API rendering to compare performance, and project markers onto the globe surface or keep them always facing the camera, like Billboards. As for animations: the default speed is still animating aircraft movement, you just have to zoom in to the max to notice it, as they move at a realistic speed. It’s much more entertaining to speed up time x1000 and see them move around the globe from afar.

Btw, I noticed that most of the controls are unreachable in portrait orientation on mobile phones, something to be fixed next.

Please let me know if the default settings worked for you and whether switching between Billboards and GPU points shows a performance difference for you. If there is still a performance issue, I’ll add options to render 1-10 thousand markers to allow a wider range of options. And with Billboards vs GPU points performance, I think it’d show a substantial difference if CPU is the bottleneck, but if it’s GPU they’d probably be around the same.

Hiya,

Perfect, I must have missed it the first time around. It seems the “Performance” setting is the one that mostly does it. What does it control? I can do x10 multiplier in low, no problem, but at that rate mid is slow and high is unusable. But I love this kind of demo of what’s possible.

Cheers,

Alex

Nice!

Performance is controlling:

viewer.resolutionScale (devicePixelRatio→1→0.5), which reduces the number of pixels that need to be calculated, and

viewer.scene.globe.maximumScreenSpaceError (1→2→4) - map tiles/texture quality.
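
As a rough sketch, those two values could be grouped into presets like this. The function name and the level names are hypothetical, and reading the arrows as running from the highest quality setting to the lowest is an assumption, not something confirmed in the demo:

```javascript
// Hypothetical presets mirroring the two values listed above.
// Assumption: the arrows run from the highest quality setting to the lowest.
function performancePreset(level, devicePixelRatio = 1) {
  const presets = {
    high: { resolutionScale: devicePixelRatio, maximumScreenSpaceError: 1 },
    medium: { resolutionScale: 1, maximumScreenSpaceError: 2 },
    low: { resolutionScale: 0.5, maximumScreenSpaceError: 4 },
  };
  if (!Object.prototype.hasOwnProperty.call(presets, level)) {
    throw new Error(`Unknown performance level: ${level}`);
  }
  return presets[level];
}
```

The returned values would then be assigned to `viewer.resolutionScale` and `viewer.scene.globe.maximumScreenSpaceError` respectively.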